In lots of organizations, as soon as the work has been achieved to combine a

new system into the mainframe, say, it turns into a lot

simpler to work together with that system through the mainframe relatively than

repeat the combination every time. For a lot of legacy programs with a

monolithic structure this made sense, integrating the

similar system into the identical monolith a number of instances would have been

wasteful and sure complicated. Over time different programs start to succeed in

into the legacy system to fetch this information, with the originating

built-in system typically “forgotten”.

Often this results in a legacy system changing into the one level

of integration for a number of programs, and therefore additionally changing into a key

upstream information supply for any enterprise processes needing that information.

Repeat this strategy a couple of instances and add within the tight coupling to

legacy information representations we regularly see,

for instance as in Invasive Vital Aggregator, then this could create

a major problem for legacy displacement.

By tracing sources of knowledge and integration factors again “past” the

legacy property we are able to typically “revert to supply” for our legacy displacement

efforts. This will permit us to scale back dependencies on legacy

early on in addition to offering a chance to enhance the standard and

timeliness of knowledge as we are able to carry extra fashionable integration methods

into play.

Additionally it is value noting that it’s more and more important to grasp the true sources

of knowledge for enterprise and authorized causes similar to GDPR. For a lot of organizations with

an intensive legacy property it is just when a failure or situation arises that

the true supply of knowledge turns into clearer.

How It Works

As a part of any legacy displacement effort we have to hint the originating

sources and sinks for key information flows. Relying on how we select to slice

up the general downside we might not want to do that for all programs and

information directly; though for getting a way of the general scale of the work

to be achieved it is extremely helpful to grasp the principle

flows.

Our goal is to supply some sort of knowledge movement map. The precise format used

is much less vital,

relatively the important thing being that this discovery does not simply

cease on the legacy programs however digs deeper to see the underlying integration factors.

We see many

structure diagrams whereas working with our shoppers and it’s shocking

how typically they appear to disregard what lies behind the legacy.

There are a number of methods for tracing information via programs. Broadly

we are able to see these as tracing the trail upstream or downstream. Whereas there’s

typically information flowing each to and from the underlying supply programs we

discover organizations are inclined to assume in phrases solely of knowledge sources. Maybe

when considered via the lenses of the legacy programs this

is essentially the most seen a part of any integration? It isn’t unusual to

discover the movement of knowledge from legacy again into supply programs is the

most poorly understood and least documented a part of any integration.

For upstream we regularly begin with the enterprise processes after which try

to hint the movement of knowledge into, after which again via, legacy.

This may be difficult, particularly in older programs, with many various

mixtures of integration applied sciences. One helpful method is to make use of

is CRC playing cards with the purpose of making

a dataflow diagram alongside sequence diagrams for key enterprise

course of steps. Whichever method we use it’s critical to get the appropriate

folks concerned, ideally those that initially labored on the legacy programs

however extra generally those that now help them. If these folks aren’t

out there and the data of how issues work has been misplaced then beginning

at supply and dealing downstream could be extra appropriate.

Tracing integration downstream will also be extraordinarily helpful and in our

expertise is usually uncared for, partly as a result of if

Characteristic Parity is in play the main focus tends to be solely

on current enterprise processes. When tracing downstream we start with an

underlying integration level after which attempt to hint via to the

key enterprise capabilities and processes it helps.

Not in contrast to a geologist introducing dye at a doable supply for a

river after which seeing which streams and tributaries the dye ultimately seems in

downstream.

This strategy is very helpful the place data concerning the legacy integration

and corresponding programs is briefly provide and is very helpful after we are

creating a brand new element or enterprise course of.

When tracing downstream we’d uncover the place this information

comes into play with out first realizing the precise path it

takes, right here you’ll doubtless wish to evaluate it towards the unique supply

information to confirm if issues have been altered alongside the way in which.

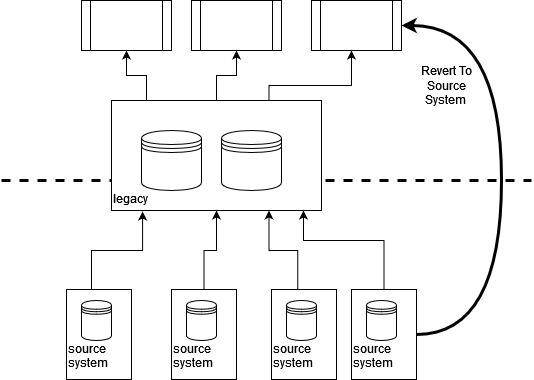

As soon as we perceive the movement of knowledge we are able to then see whether it is doable

to intercept or create a replica of the information at supply, which might then movement to

our new answer. Thus as a substitute of integrating to legacy we create some new

integration to permit our new parts to Revert to Supply.

We do want to ensure we account for each upstream and downstream flows,

however these do not should be applied collectively as we see within the instance

beneath.

If a brand new integration is not doable we are able to use Occasion Interception

or much like create a replica of the information movement and route that to our new element,

we wish to try this as far upstream as doable to scale back any

dependency on current legacy behaviors.

When to Use It

Revert to Supply is most helpful the place we’re extracting a selected enterprise

functionality or course of that depends on information that’s in the end

sourced from an integration level “hiding behind” a legacy system. It

works greatest the place the information broadly passes via legacy unchanged, the place

there’s little processing or enrichment taking place earlier than consumption.

Whereas this may increasingly sound unlikely in follow we discover many instances the place legacy is

simply performing as a integration hub. The primary modifications we see taking place to

information in these conditions are lack of information, and a discount in timeliness of knowledge.

Lack of information, since fields and components are often being filtered out

just because there was no approach to characterize them within the legacy system, or

as a result of it was too pricey and dangerous to make the modifications wanted.

Discount in timeliness since many legacy programs use batch jobs for information import, and

as mentioned in Vital Aggregator the “secure information

replace interval” is usually pre-defined and close to inconceivable to vary.

We will mix Revert to Supply with Parallel Operating and Reconciliation

with the intention to validate that there is not some further change taking place to the

information inside legacy. This can be a sound strategy to make use of on the whole however

is very helpful the place information flows through totally different paths to totally different

finish factors, however should in the end produce the identical outcomes.

There will also be a robust enterprise case to be made

for utilizing Revert to Supply as richer and extra well timed information is usually

out there.

It is not uncommon for supply programs to have been upgraded or

modified a number of instances with these modifications successfully remaining hidden

behind legacy.

We have seen a number of examples the place enhancements to the information

was really the core justification for these upgrades, however the advantages

had been by no means totally realized because the extra frequent and richer updates may

not be made out there via the legacy path.

We will additionally use this sample the place there’s a two approach movement of knowledge with

an underlying integration level, though right here extra care is required.

Any updates in the end heading to the supply system should first

movement via the legacy programs, right here they might set off or replace

different processes. Fortunately it’s fairly doable to separate the upstream and

downstream flows. So, for instance, modifications flowing again to a supply system

may proceed to movement through legacy, whereas updates we are able to take direct from

supply.

You will need to be conscious of any cross useful necessities and constraints

that may exist within the supply system, we do not wish to overload that system

or discover out it’s not relaiable or out there sufficient to straight present

the required information.

Retail Retailer Instance

For one retail consumer we had been in a position to make use of Revert to Supply to each

extract a brand new element and enhance current enterprise capabilities.

The consumer had an intensive property of retailers and a extra lately created

web page for on-line procuring. Initially the brand new web site sourced all of

it is inventory info from the legacy system, in flip this information

got here from a warehouse stock monitoring system and the retailers themselves.

These integrations had been achieved through in a single day batch jobs. For

the warehouse this labored fantastic as inventory solely left the warehouse as soon as

per day, so the enterprise may make certain that the batch replace acquired every

morning would stay legitimate for about 18 hours. For the retailers

this created an issue since inventory may clearly depart the retailers at

any level all through the working day.

Given this constraint the web site solely made out there inventory on the market that

was within the warehouse.

The analytics from the positioning mixed with the store inventory

information acquired the next day made clear gross sales had been being

misplaced consequently: required inventory had been out there in a retailer all day,

however the batch nature of the legacy integration made this inconceivable to

benefit from.

On this case a brand new stock element was created, initially to be used solely

by the web site, however with the purpose of changing into the brand new system of file

for the group as a complete. This element built-in straight

with the in-store until programs which had been completely able to offering

close to real-time updates as and when gross sales befell. In actual fact the enterprise

had invested in a extremely dependable community linking their shops so as

to help digital funds, a community that had loads of spare capability.

Warehouse inventory ranges had been initially pulled from the legacy programs with

long term purpose of additionally reverting this to supply at a later stage.

The top end result was an internet site that would safely supply in-store inventory

for each in-store reservation and on the market on-line, alongside a brand new stock

element providing richer and extra well timed information on inventory actions.

By reverting to supply for the brand new stock element the group

additionally realized they may get entry to far more well timed gross sales information,

which at the moment was additionally solely up to date into legacy through a batch course of.

Reference information similar to product traces and costs continued to movement

to the in-store programs through the mainframe, completely acceptable given

this modified solely occasionally.